What is Data Aggregation?

Data aggregation is the structured process of collecting, combining, and summarizing raw data from multiple sources into aligned, analyzable formats. This article explores its importance in SRE, DevOps, and incident management workflows, especially as observability and AI-driven operations become the norm in 2025.

Definition & technical foundations

Data aggregation involves compiling raw data from various sources, such as databases, logs, telemetry, and metrics, and transforming it into summary forms such as counts, sums, averages, minima, maxima, or other aggregate statistics. Technically, this often occurs via aggregate functions (SUM, COUNT, AVG, MAX, MIN, and, where the engine supports it, MEDIAN) in databases or query engines.
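As a minimal sketch of these aggregate functions in practice, the snippet below runs a GROUP BY query against an in-memory SQLite database using Python's standard library. The `requests` table, its columns, and the sample values are hypothetical and chosen only for illustration. MEDIAN is left out because SQLite does not provide it as a built-in aggregate.

```python
import sqlite3

# Hypothetical request-latency samples: (service, latency_ms).
rows = [
    ("api", 120.0),
    ("api", 80.0),
    ("api", 100.0),
    ("db", 30.0),
    ("db", 50.0),
]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE requests (service TEXT, latency_ms REAL)")
conn.executemany("INSERT INTO requests VALUES (?, ?)", rows)

# One summary row per service, built from standard SQL aggregate functions.
summary = conn.execute(
    """
    SELECT service,
           COUNT(*)        AS n,
           SUM(latency_ms) AS total_ms,
           AVG(latency_ms) AS avg_ms,
           MIN(latency_ms) AS min_ms,
           MAX(latency_ms) AS max_ms
    FROM requests
    GROUP BY service
    ORDER BY service
    """
).fetchall()
conn.close()

for row in summary:
    print(row)
```

Five raw rows collapse into two summary rows, one per service, which is the basic shape of most aggregation queries.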

Beyond simple summaries, modern aggregation emphasizes schema alignment, timestamp normalization, deduplication, and lineage tracking to ensure aggregated datasets are trustworthy and query-ready. Workflows typically include:

- Ingestion – pulling data from diverse sources (e.g. APIs, log streams).

- Normalization – aligning field names, formats, timestamps.

- Deduplication – eliminating redundant records.

- Grouping and summarizing – collapsing data into meaningful aggregates.

- Validation and lineage – enforcing schema integrity and traceability.
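The workflow steps above can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions, not a reference implementation: the event fields (`svc`, `service`, `ts`, `timestamp`, `latency_ms`), the one-minute grouping, and the validation rule are all hypothetical.

```python
from collections import defaultdict
from datetime import datetime, timezone

# Ingestion: hypothetical raw events from two sources that use
# different field names and timestamp formats.
RAW = [
    {"id": "a1", "svc": "api", "ts": "2025-01-01T00:00:05Z", "latency_ms": 120},
    {"id": "a1", "svc": "api", "ts": "2025-01-01T00:00:05Z", "latency_ms": 120},  # duplicate
    {"id": "b7", "service": "api", "timestamp": 1735689630, "latency_ms": 80},
    {"id": "c3", "service": "db", "timestamp": 1735689640, "latency_ms": 40},
]


def normalize(event):
    """Normalization: map source-specific fields onto one schema with UTC times."""
    ts = event.get("ts", event.get("timestamp"))
    if isinstance(ts, str):
        when = datetime.fromisoformat(ts.replace("Z", "+00:00"))
    else:
        when = datetime.fromtimestamp(ts, tz=timezone.utc)
    return {
        "id": event["id"],
        "service": event.get("service", event.get("svc")),
        "time": when.astimezone(timezone.utc),
        "latency_ms": float(event["latency_ms"]),
    }


def validate(record):
    """Validation: an assumed rule (service present, latency non-negative)."""
    return bool(record["service"]) and record["latency_ms"] >= 0


def aggregate(raw_events):
    seen = set()
    groups = defaultdict(list)
    for record in map(normalize, raw_events):
        # Deduplication: skip records whose id was already processed.
        if record["id"] in seen or not validate(record):
            continue
        seen.add(record["id"])
        # Grouping: one bucket per service per minute.
        minute = record["time"].replace(second=0, microsecond=0)
        groups[(record["service"], minute)].append(record)
    return {
        key: {
            "count": len(records),
            "avg_latency_ms": sum(r["latency_ms"] for r in records) / len(records),
            "source_ids": [r["id"] for r in records],  # lineage back to raw events
        }
        for key, records in groups.items()
    }


result = aggregate(RAW)
for key, stats in result.items():
    print(key, stats)
```

Keeping `source_ids` on every aggregate is one simple way to preserve lineage: any summary value can be traced back to the raw records that produced it.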

Use cases in SRE, DevOps & incident management

Observability & telemetry aggregation

SRE teams rely on aggregated telemetry, which combines metrics, logs, and traces, to construct observability dashboards. Data aggregation enables trend analysis, anomaly detection, and root-cause insights by summarizing high-cardinality telemetry into digestible forms.

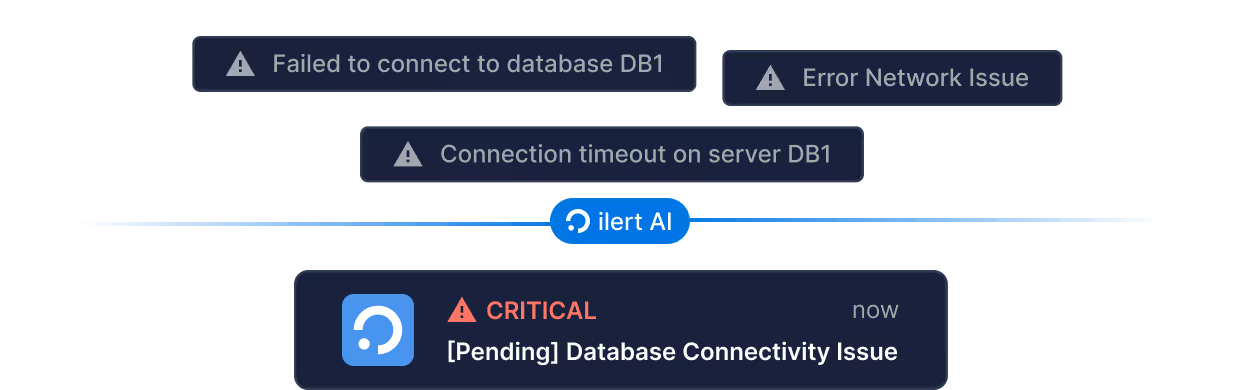
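To make "summarizing telemetry into digestible forms" concrete, here is a small sketch that rolls individual latency samples up into per-minute counts and percentiles. The sample format (epoch seconds, latency in milliseconds), the 60-second bucket, and the nearest-rank percentile method are assumptions made for this example; production systems often use sketches or histograms instead.

```python
import math
from collections import defaultdict


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list, with pct in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(samples, bucket_seconds=60):
    """Collapse (timestamp, latency_ms) samples into one summary per time bucket."""
    buckets = defaultdict(list)
    for ts, latency in samples:
        buckets[ts - ts % bucket_seconds].append(latency)
    return {
        start: {
            "count": len(values),
            "p50": percentile(values, 50),
            "p95": percentile(values, 95),
            "max": max(values),
        }
        for start, values in sorted(buckets.items())
    }


# Hypothetical samples: (epoch seconds, latency in ms).
samples = [(0, 10), (10, 20), (20, 30), (30, 40), (59, 500), (60, 15), (90, 25)]
summary = summarize(samples)
for start, stats in summary.items():
    print(start, stats)
```

A dashboard can then plot two points per series instead of seven raw samples, while the p95 and max values still surface the 500 ms outlier.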

Alert deduplication & noise reduction

In modern incident management systems like ilert, sophisticated aggregation helps consolidate hundreds or thousands of events – particularly during cascading failures – into a small number of meaningful alerts, preventing alert fatigue and improving responder efficiency.
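The general idea of event consolidation can be illustrated with a simple key-and-time-window rule. The sketch below is a generic illustration and does not describe how ilert or any other product groups events; the `(service, check)` grouping key, the 300-second window, and the `Alert` class are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    key: tuple
    first_seen: int
    last_seen: int
    event_count: int = 1


def consolidate(events, window_seconds=300):
    """Fold events with the same key into one alert while they keep
    arriving within window_seconds of the previous matching event."""
    open_alerts = {}
    alerts = []
    for ts, service, check in sorted(events):
        key = (service, check)
        alert = open_alerts.get(key)
        if alert is not None and ts - alert.last_seen <= window_seconds:
            # Same problem, still ongoing: extend the existing alert.
            alert.last_seen = ts
            alert.event_count += 1
        else:
            # First event for this key, or the previous alert went quiet.
            alert = Alert(key, ts, ts)
            open_alerts[key] = alert
            alerts.append(alert)
    return alerts


# Hypothetical events: (epoch seconds, service, check name).
events = [
    (0, "api", "http_5xx"),
    (30, "api", "http_5xx"),
    (60, "api", "http_5xx"),
    (45, "db", "conn_errors"),
    (1000, "api", "http_5xx"),
]
alerts = consolidate(events)
for alert in alerts:
    print(alert)
```

Five events become three alerts here. The choice of grouping key and window length is the main tuning decision: too narrow and noise leaks through, too broad and unrelated problems get merged.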

AI-driven incident detection

AIOps platforms ingest and aggregate data at scale to detect anomalies, predict incidents, and even initiate automated remediation. Future-ready AIOps frameworks unify data across sources and apply machine learning for incident lifecycle management.
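As a deliberately simple stand-in for the machine-learning models such platforms use, the sketch below flags anomalies in an already aggregated series (for example, errors per minute) with a trailing-window z-score. The window size, threshold, and sample series are assumptions for illustration, and a z-score is a basic statistical rule rather than a learned model.

```python
from statistics import mean, pstdev


def find_anomalies(series, window=5, threshold=3.0):
    """Return indices whose value lies more than `threshold` standard
    deviations from the mean of the preceding `window` values."""
    anomalies = []
    for i in range(window, len(series)):
        history = series[i - window:i]
        mu = mean(history)
        sigma = pstdev(history)
        if sigma == 0:
            # Flat history: treat any change at all as anomalous.
            if series[i] != mu:
                anomalies.append(i)
            continue
        if abs(series[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical per-minute error counts produced by an upstream aggregation step.
errors_per_minute = [10, 12, 11, 9, 10, 11, 60, 10, 12]
anomalies = find_anomalies(errors_per_minute)
print(anomalies)
```

The detector works on aggregates rather than raw events, which is why the quality of the upstream aggregation (bucketing, deduplication, consistent timestamps) directly affects what it can detect.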

Real-time vs batch aggregation in modern observability

Data aggregation can be performed in two primary modes: batch and real-time. Batch aggregation processes data at scheduled intervals – typically hourly or nightly – to generate reports, business intelligence dashboards, or SLA summaries. It is particularly useful for retrospective analysis and audit trails, where stability and consistency are essential. In contrast, real-time (streaming) aggregation continuously processes data as it arrives, enabling near-instant insights. This mode is critical for live dashboards, anomaly detection, and auto-triggered workflows that support rapid incident response in DevOps environments. In practice, many organizations adopt a hybrid approach, using batch aggregation for long-term analytics and compliance, while relying on real-time aggregation for operational monitoring and incident response.
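The difference between the two modes can be sketched with a per-minute event count computed both ways. This is a simplified illustration: the 60-second tumbling window is an assumption, and the streaming version assumes events arrive in timestamp order, so it does not handle late or out-of-order data the way real stream processors must.

```python
from collections import Counter

WINDOW = 60  # seconds per tumbling window (assumed for this example)


def batch_counts(timestamps):
    """Batch mode: process the complete dataset in one pass after the fact."""
    counts = Counter(ts - ts % WINDOW for ts in timestamps)
    return sorted(counts.items())


class StreamingCounter:
    """Streaming mode: hold only the current window and emit it when it closes."""

    def __init__(self):
        self.window_start = None
        self.count = 0

    def add(self, ts):
        """Feed one in-order timestamp; return (window_start, count) for a
        window that just closed, or None if the current window is still open."""
        start = ts - ts % WINDOW
        closed = None
        if self.window_start is not None and start != self.window_start:
            closed = (self.window_start, self.count)
            self.count = 0
        self.window_start = start
        self.count += 1
        return closed

    def flush(self):
        """Emit the still-open window, e.g. at shutdown."""
        if self.window_start is None:
            return None
        return (self.window_start, self.count)


timestamps = [5, 20, 59, 61, 130, 140]

stream = StreamingCounter()
emitted = [w for w in map(stream.add, timestamps) if w is not None]
emitted.append(stream.flush())

print(batch_counts(timestamps))
print(emitted)
```

Both paths produce the same per-window counts. The batch version needs the full dataset up front, while the streaming version can report each window as soon as the next one begins, using constant memory.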

2025 trends shaping data aggregation in incident response

AI and machine learning are changing incident response by enabling predictive analytics, automated anomaly detection, and intelligent root cause identification. This shift allows SRE and DevOps teams to move from reactive firefighting to proactive resilience engineering. Data aggregation underpins that shift, because these AI-powered decisions and workflows depend on consolidated, consistent input data.

The growing adoption of open standards such as OpenTelemetry (OTel) is simplifying the process of aggregating data across different telemetry types: metrics, logs, and traces. By unifying schema definitions and promoting vendor neutrality, OTel facilitates the creation of consistent, interoperable observability pipelines that are easier to maintain and extend across distributed systems.

Another key trend is the convergence of observability and security. Modern incident response workflows increasingly integrate operational and security data to create a unified incident timeline. Aggregating telemetry from both domains enhances threat detection and enables a more complete and contextual understanding of incidents, improving the quality and speed of mitigation efforts.
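A unified incident timeline is, at its simplest, a time-ordered merge of event streams from both domains. The sketch below assumes each stream is already sorted by timestamp and uses hypothetical `(timestamp, domain, message)` tuples; real pipelines would also need clock alignment and correlation keys.

```python
import heapq
from operator import itemgetter

# Hypothetical, already time-sorted streams: (epoch seconds, domain, message).
ops_events = [
    (100, "ops", "deploy of checkout-service started"),
    (160, "ops", "p95 latency above threshold"),
]
security_events = [
    (90, "security", "unusual login volume"),
    (150, "security", "WAF rule triggered"),
]


def build_timeline(*streams):
    """Lazily merge streams that are each sorted by timestamp."""
    return list(heapq.merge(*streams, key=itemgetter(0)))


timeline = build_timeline(ops_events, security_events)
for ts, domain, message in timeline:
    print(ts, domain, message)
```

`heapq.merge` keeps the merge incremental, so the same approach works when the inputs are generators over large log files rather than in-memory lists.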

Best practices for engineers

- Enforce schema and versioning – Prevent misaligned aggregation logic by strictly defining and versioning schemas.

- Ensure traceability – Maintain data lineage so every aggregate links back to its sources for audit and debugging.

- Combine batch and real-time – Balance stability with responsiveness.

- Embed AI where useful – Leverage anomaly detection and predictive aggregation to uncover trends and outliers early.

- Adopt open standards – Using formats like OTel ensures scalable, interoperable aggregation across tools.
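The first two practices can be combined in a short sketch: records carry an explicit schema version, the aggregator refuses anything it does not understand, and the result records which inputs were used and which were rejected. The field names, the version number, and the rejection rules are hypothetical.

```python
SCHEMA_VERSION = 2  # the only version this aggregator understands (assumed)
REQUIRED_FIELDS = {"schema_version", "id", "service", "value"}


def validate(record):
    """Return a reason string if the record is unusable, otherwise None."""
    missing = REQUIRED_FIELDS - record.keys()
    if missing:
        return "missing fields: " + ", ".join(sorted(missing))
    if record["schema_version"] != SCHEMA_VERSION:
        return f"unsupported schema_version {record['schema_version']}"
    return None


def aggregate(records):
    accepted, rejected = [], []
    for record in records:
        reason = validate(record)
        if reason is None:
            accepted.append(record)
        else:
            rejected.append((record.get("id"), reason))
    return {
        "schema_version": SCHEMA_VERSION,
        "total": sum(r["value"] for r in accepted),
        "source_ids": [r["id"] for r in accepted],  # lineage for audit and debugging
        "rejected": rejected,  # kept visible instead of silently dropped
    }


# Hypothetical input with one outdated record and one incomplete record.
records = [
    {"schema_version": 2, "id": "m1", "service": "api", "value": 3},
    {"schema_version": 1, "id": "m2", "service": "api", "value": 5},
    {"schema_version": 2, "id": "m3", "service": "api"},
    {"schema_version": 2, "id": "m4", "service": "api", "value": 4},
]
result = aggregate(records)
print(result)
```

Reporting rejected records alongside the aggregate makes a schema mismatch show up as an explicit signal rather than as a quietly wrong total.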

ilert observability integrations

Modern observability platforms such as Prometheus, Datadog, Grafana, New Relic, AWS CloudWatch, and Elastic rely heavily on data aggregation to transform raw telemetry into usable insights. Metrics are aggregated into trends, logs are summarized into patterns, and traces are collapsed into end-to-end service maps. While these tools excel at collecting and processing massive event streams, they often generate overlapping or redundant alerts during incidents.

This is where ilert adds value. By integrating directly with these observability tools, ilert applies an additional layer of intelligent event aggregation and correlation. Instead of passing every signal downstream, ilert consolidates related events into a single, actionable alert.

Conclusions

Data aggregation is a foundational technique in modern IT operations – transforming raw telemetry and events into structured insights that empower SRE and DevOps teams. As systems grow more complex, effective aggregation – schema-enforced, real-time, AI-enhanced, and security-aware – has become indispensable to incident response and operational resilience in 2025.